- Why Facebook Ads Traffic Fails to Convert

- What Is Facebook Landing Page A/B Testing

- What Should You Test First on a Facebook Landing Page

- How to Set Up a Facebook Landing Page A/B Test on Shopify

- How Long Should You Run a Facebook Landing Page Test

- From Experiment to Scale: Ready to Turn Winners into Profitable Campaigns

- FAQs about Facebook Landing Page A/B Testing

Your Facebook ads are getting clicks, but not sales. The problem might not be your targeting, creative, or budget. It might be your landing page. Most Shopify brands obsess over ad performance while ignoring what happens after the click. That’s where conversions actually happen, or die.

Today, let’s go through how to run Facebook landing page A/B testing the right way, improve ROAS without touching your ads, and turn paid traffic into measurable revenue growth.

Why Facebook Ads Traffic Fails to Convert

You launch a Facebook campaign, and the early numbers look promising. CTR is strong, CPC is stable, and traffic is coming in consistently. On paper, everything suggests the campaign should work.

But revenue tells a different story.

Sales don’t scale, ROAS stalls, and suddenly the instinct is to tweak the ad: change the creative, test a new hook, or expand targeting. What rarely gets questioned is the experience after the click.

That’s where conversions are actually won or lost.

High CTR Doesn’t Mean High Revenue

A click only proves one thing: your ad captured attention. It doesn’t prove your landing page can convert that attention into buying intent.

Facebook’s algorithm is excellent at driving engagement, especially when your creative resonates. But once users leave the platform and land on your Shopify page, the responsibility shifts entirely to your site. If the value proposition isn’t immediately clear, if the layout feels cluttered, or if the CTA lacks urgency, momentum drops within seconds.

This gap between attention and persuasion is where many brands struggle. They interpret strong CTR as campaign success when in reality, conversion rate is the metric that determines profitability. That’s why serious Shopify conversion rate optimization efforts always look beyond the ad itself and into post-click behavior.
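To make the link between conversion rate and profitability concrete, here is a minimal sketch. It assumes every click becomes one landing page visit and that revenue is simply orders times average order value; the function name `roas` and the figures in the usage lines are illustrative, not taken from a real campaign.

```python
def roas(clicks: int, cpc: float, conversion_rate: float, aov: float) -> float:
    """Return on ad spend: revenue divided by ad spend.

    Assumes each click is one visit and revenue = orders * average order value.
    """
    if clicks <= 0 or cpc <= 0:
        raise ValueError("clicks and cpc must be positive")
    ad_spend = clicks * cpc
    revenue = clicks * conversion_rate * aov
    return revenue / ad_spend


# Illustrative numbers only: same clicks, same CPC, same AOV.
# The only thing that changes is the landing page conversion rate.
baseline = roas(clicks=1000, cpc=1.00, conversion_rate=0.02, aov=40.0)
improved = roas(clicks=1000, cpc=1.00, conversion_rate=0.03, aov=40.0)
print(f"baseline ROAS: {baseline:.2f}, improved ROAS: {improved:.2f}")
```

Because clicks cancel out, ROAS in this simplified model depends only on conversion rate, AOV, and CPC. A landing page lift raises ROAS without any change to the ad.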

The Message Match Problem

One of the most common causes of poor conversion is message mismatch. Your ad might highlight a bold offer, a strong benefit, or a specific pain point. But when users land on the page, they’re met with generic headlines or broad brand messaging that doesn’t reinforce what brought them there in the first place.

That subtle disconnect creates hesitation. Users subconsciously question whether they’re in the right place. Friction increases, bounce rate climbs, and trust weakens before the product even has a chance to sell.

For stores trying to reduce bounce rate on Shopify, tightening the alignment between ad messaging and landing page content is often the fastest performance win.

The Post-Click Blind Spot in Most Facebook Campaigns

Many performance reports focus almost exclusively on ad-level metrics like CTR, CPC, and ROAS. These numbers matter, but they don’t explain user behavior once traffic hits the site.

What happens after users scroll? Where do they pause? Which sections get ignored? How many reach the CTA but don’t click? Without structured analysis, these questions go unanswered.

Landing pages function as conversion funnels. Each section either moves the user closer to purchase or quietly pushes them away. Without testing and structured e-commerce optimization, most changes are based on design preference or instinct rather than data.

Shopify Stores Often Misdiagnose Conversion Issues

When ROAS declines, the default assumption is usually audience fatigue or creative burnout. Budgets are adjusted, targeting is refined, and new ads are launched. Performance fluctuates, but the underlying issue often remains untouched.

The more strategic question isn’t “Which ad should we try next?” but “Is this landing page doing its job?” To answer it, check:

- Are users clearly guided from headline to offer to CTA?

- Is the mobile experience frictionless?

- Is the value proposition strong enough above the fold?

Without systematic A/B testing, redesigns become guesswork, and guesswork is expensive when you’re paying for every click. While Facebook brings the traffic, your landing page decides whether that traffic turns into revenue.

Learn more: How to Optimize Facebook Ads Landing Pages Using Real Experiments

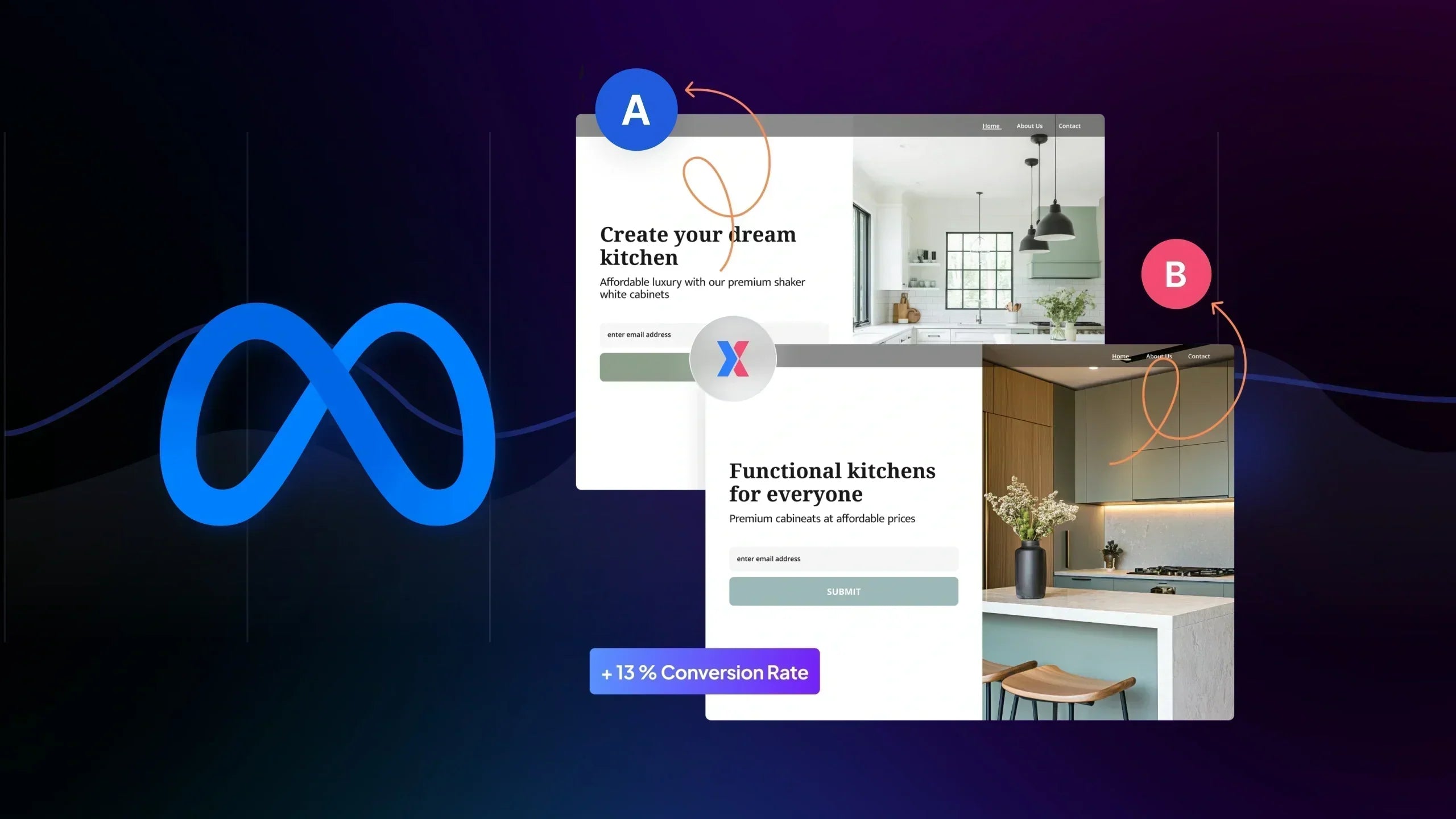

What Is Facebook Landing Page A/B Testing

Facebook landing page A/B testing is the process of sending paid traffic to two or more versions of the same landing page to determine which version generates more conversions.

Instead of guessing whether a headline, offer, or layout works better, you split traffic evenly between variations and measure performance using a defined metric, usually conversion rate, revenue per visitor, or add-to-cart rate.

Your ad stays the same. Your traffic source stays the same. Only the landing page changes.

That isolation is what makes the test reliable.

If you're new to structured experimentation, this follows the same principles as A/B testing on Shopify.

Testing Ads vs Testing Landing Pages: What’s the Difference

When you test Facebook ads, you’re optimizing:

- Creative

- Hook

- Targeting

- Format

When you test landing pages, you’re optimizing:

- Messaging clarity

- Offer framing

- Page structure

- CTA effectiveness

While ad testing improves click performance, landing page testing improves post-click performance. They both matter, but they solve different problems. If your CTR is strong but sales are weak, the bottleneck likely isn’t the ad. It’s the page experience after the click.

This distinction is critical in performance marketing because many brands confuse traffic optimization with conversion optimization.

Why Landing Page Tests Are More Scalable Than Ad Tests

Ad testing is inherently tied to platform dynamics. Creative fatigue sets in quickly, CPMs fluctuate, and audience saturation can distort results within days. Even when you find a winning ad, its performance often declines as delivery conditions shift.

Landing page testing works differently.

Instead of optimizing a single campaign asset, you’re strengthening the conversion foundation behind every campaign. When a landing page variation proves it can convert better, that improvement doesn’t disappear with audience changes or creative refresh cycles. It continues to perform across cold traffic, retargeting, and even new campaigns launched months later.

That’s why many experienced marketers see landing page experimentation as one of the core benefits of A/B testing. The impact compounds over time.

You’re not just improving one ad set. You’re upgrading the conversion engine that powers all of your paid traffic.

How A/B Testing Protects Paid Traffic Performance

Paid traffic is expensive, and every click costs money.

Without structured testing, most changes to a landing page are risky. A redesign might improve aesthetics but quietly hurt conversion rate. A new headline might sound stronger but reduce clarity.

A/B testing reduces that risk. Instead of replacing the original version outright, you compare variants under controlled traffic conditions. Performance data determines the winner. This is a foundational principle in modern A/B testing in marketing.

What Should You Test First on a Facebook Landing Page

When it comes to Facebook ads landing page optimization, most brands make one mistake: they test random elements instead of testing what actually influences buying decisions.

Not all changes are equal. Some experiments move revenue; others barely move metrics.

If your goal is to improve Facebook ads conversion rate, you should start with the elements that shape first impressions, reinforce trust, and guide users toward action. That usually means focusing on three areas: above-the-fold clarity, persuasion elements, and structural flow.

Above-the-Fold Optimization

The above-the-fold section is where post-click optimization begins. Users decide within seconds whether to stay or leave. If this section fails, nothing below it matters.

Hero Headline (Value Proposition Clarity)

Your headline should immediately answer one question: Why should I care?

Facebook traffic is colder than search traffic. Users didn’t actively search for your product. They clicked because something interrupted their scroll. When they land, clarity beats creativity.

Test variations that:

- Reinforce the ad promise

- Emphasize a core benefit over a feature

- Address a specific pain point

For example, compare: “Premium Skincare for Everyday Use” vs. “Clinically Tested Formula That Reduces Acne in 14 Days”.

That’s not just copy testing. That’s intent alignment.

In structured Shopify landing page testing, headline clarity often produces one of the fastest conversion lifts because it directly impacts bounce behavior.

Offer Framing (Discount vs Bundle vs Free Shipping)

Sometimes the product isn’t the problem. The way the offer is presented is.

You might test:

- Percentage discount vs fixed amount

- Bundle savings vs single-item promotion

- Free shipping threshold vs automatic discount

These experiments influence perceived value more than most visual tweaks. In e-commerce A/B testing, offer framing frequently changes revenue per visitor more significantly than button color ever will.

The key is to test one framing mechanism at a time so you can clearly measure impact on conversion rate or average order value.

CTA Copy & Placement

Your call-to-action is the decision trigger. If it feels passive, generic, or poorly positioned, users hesitate.

Testing CTA variations can include:

- “Buy Now” vs “Get Yours Today”

- Single primary CTA vs repeated CTAs throughout the page

- Button placement closer to social proof blocks

CTA experiments are often underestimated, but small wording changes can improve micro-conversions significantly.

For a deeper framework, learn more about CTA testing.

If you’re looking for broader experimentation inspiration, review these A/B testing ideas for ecommerce.

Trust & Persuasion Elements

Once users understand the offer, the next barrier is trust.

Facebook traffic is interruption-based. Users are skeptical by default. If your landing page doesn’t build credibility quickly, conversion stalls.

Social Proof Placement

It’s not just whether you have testimonials; it’s where you place them.

Testing social proof above the fold versus mid-page can significantly affect engagement depth. When reviews appear immediately after the value proposition, they reinforce credibility before doubt sets in.

Reviews & UGC Blocks

User-generated content adds authenticity that brand copy cannot replicate.

You can test:

- Static testimonials vs image-based reviews

- Short quotes vs detailed stories

- UGC video snippets vs written feedback

For brands focused on increasing ecommerce sales, these persuasion-layer experiments often produce measurable lifts by reducing purchase anxiety.

Trust Badges & Guarantees

Money-back guarantees, secure checkout badges, and shipping transparency signals may feel minor, but they address hidden objections.

Instead of assuming they work, test variations:

- Guarantee badge near CTA vs near pricing

- 30-day vs 60-day framing

- Icons vs text explanations

Small trust adjustments often unlock higher add-to-cart rates, especially for first-time visitors.

Structural & UX Experiments

After messaging and persuasion, structure becomes the performance lever.

Long-Form vs Short-Form Landing Pages

There’s no universal rule for page length. Some products need storytelling. Others convert better with minimal friction. Testing long-form versus short-form layouts is a classic example of landing page A/B testing.

For higher-ticket items, longer pages that educate and handle objections often perform better. For impulse purchases, shorter formats may win. The only reliable answer comes from controlled experimentation.

Video vs Static Hero

Video can increase engagement, but it can also slow load time or distract from the primary CTA.

Consider testing:

- Autoplay video vs static hero image

- Explainer-style video vs testimonial video

- Video above the fold vs mid-page

These experiments directly impact post-click engagement and are common in landing page testing for paid traffic.

Sticky Add-to-Cart vs Standard CTA

Mobile behavior changes everything. Sticky CTAs can reduce friction, especially for users who are scrolling through a long page. However, they can also feel aggressive or cluttered.

Testing sticky add-to-cart functionality is often part of broader funnel testing strategies, where micro-conversion improvements compound into higher overall revenue.

If you’re serious about improving your Facebook ads conversion rate, don’t start with random redesigns. Start with structured experiments in these three areas. They influence clarity, trust, and action, the three pillars of profitable post-click optimization.

How to Set Up a Facebook Landing Page A/B Test on Shopify

If your goal is to improve the Facebook ads conversion rate, the setup process needs to be clean and controlled. A messy test gives messy data. Here’s the exact framework you should follow.

Step 1: Lock Your Facebook Ads

Before touching the landing page, freeze your ads.

Do not:

- Change creatives

- Adjust targeting

- Modify budget

- Duplicate ad sets

Facebook’s algorithm constantly optimizes delivery. If you change ads while testing your page, you introduce a second variable. At that point, you won’t know whether performance changed because of the landing page or because of traffic quality.

For accurate landing page testing for paid traffic, your traffic source must remain stable.

Step 2: Create Two Clear Page Variations

Duplicate your existing landing page inside Shopify.

- Version A: the Control (current page)

- Version B: one meaningful change

That change should be strategic, not cosmetic. For example:

- New headline that reinforces the ad promise

- Different offer framing

- Repositioned CTA

- Short-form layout vs long-form layout

Do not redesign everything at once. Split testing landing pages only works when you isolate one key variable.

If your experiment affects structure across the full funnel (for example, testing a pre-sell page before product checkout), you may need multipage testing.

If you’re testing layout or messaging within the same template, use template testing.

Pro tip: Choose the format that matches your funnel structure, and don’t overcomplicate it.

Step 3: Split Traffic 50/50

Now distribute traffic evenly between Version A and Version B.

A 50/50 split gives both variations equal exposure under the same ad conditions, which is essential if you want reliable data. When traffic is uneven, one version may collect data faster while the other lags behind, slowing down learning and potentially skewing results, especially in paid campaigns where performance can fluctuate daily.

Equally important is true randomization. Traffic must be assigned randomly so that high-intent users, returning visitors, or specific device segments don’t unintentionally cluster into a single version. Without proper random allocation, what looks like a “winner” may simply be the result of biased distribution rather than a real improvement in conversion rate.

If you’re running structured Shopify landing page testing, this traffic control layer isn’t optional, it’s foundational. Without clean split traffic and controlled randomization, you’re not running a real experiment. You’re just comparing two URLs and hoping for clarity.

GemX handles randomized traffic allocation automatically, maintaining clean 50/50 splits without duplicate URL issues, manual redirects, or fragile workarounds. That means you can focus on interpreting results instead of worrying about whether your data is trustworthy.
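For readers who want to see what randomized, sticky assignment looks like in principle, here is a minimal sketch of hash-based bucketing. It is a generic illustration, not a description of how GemX or Shopify assigns traffic; the function name `assign_variant`, the experiment label, and the visitor ID format are all assumptions.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str = "hero-headline-test") -> str:
    """Deterministically assign a visitor to "A" or "B" with a 50/50 split.

    Hashing the experiment name together with the visitor ID means the same
    visitor always sees the same version, and assignment does not depend on
    device, time of day, or visit order.
    """
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest[:8], 16) % 100  # bucket in the range 0..99
    return "A" if bucket < 50 else "B"


# Hypothetical visitor IDs, for example values read from a first-party cookie.
visitors = [f"visitor-{i}" for i in range(10_000)]
share_a = sum(assign_variant(v) == "A" for v in visitors) / len(visitors)
print(f"Share of traffic assigned to A: {share_a:.1%}")
```

The design choice worth noting is determinism: a returning visitor lands in the same bucket every time, so one person is not counted in both versions.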

Step 4: Choose One Primary Metric

Before launching the test, define what success means.

If your goal is to improve your Facebook ads conversion rate, use conversion rate (CR). If your goal is profitability, use revenue per visitor (RPV).

Do not change the success metric mid-test. That leads to biased interpretation.

For clarity on measurement logic, review A/B testing metrics, so your team aligns on evaluation criteria from the start.
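The two metrics can point in different directions, which is why picking one up front matters. The sketch below computes both from raw counts. The class name `VariantStats` and every number in the example are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    visitors: int
    orders: int
    revenue: float

    @property
    def conversion_rate(self) -> float:
        """Orders divided by visitors (CR)."""
        return self.orders / self.visitors if self.visitors else 0.0

    @property
    def revenue_per_visitor(self) -> float:
        """Revenue divided by visitors (RPV)."""
        return self.revenue / self.visitors if self.visitors else 0.0


# Illustrative figures only: B converts more visitors but at a lower order value.
control = VariantStats(visitors=5000, orders=100, revenue=4000.0)
variant = VariantStats(visitors=5000, orders=110, revenue=3850.0)

for name, stats in (("A", control), ("B", variant)):
    print(f"{name}: CR={stats.conversion_rate:.2%}  RPV=${stats.revenue_per_visitor:.2f}")
```

In this made-up case Version B wins on CR and loses on RPV, so the declared primary metric decides which version ships.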

Learn more: Does a Higher Conversion Rate Always Mean a Winner?

Step 5: Let the Test Run Without Interference

Once your landing page test is live, do nothing: no copy edits, no layout adjustments, no pausing the experiment, and no panicking after a few days. Just let your test run. Paid traffic fluctuates daily, and early performance swings are normal.

Let data accumulate before concluding. When enough sessions are collected, review performance and properly analyze A/B testing results instead of guessing based on short-term spikes.

When executed this way, Facebook landing page A/B testing becomes a controlled growth mechanism, not a risky redesign gamble.

You’re not “trying a new page”; you’re validating which version converts paid traffic more profitably.

How Long Should You Run a Facebook Landing Page Test

The honest answer? Long enough to reach reliable data, but not so long that you waste ad spend. Test duration in Facebook landing page A/B testing depends on traffic volume, baseline conversion rate, and expected lift. Let’s break it down with numbers.

Sample Size Basics for Paid Traffic

Before asking “How many days?”, ask “How many conversions?” For most Shopify stores running paid traffic, you should aim for:

- Minimum 100 conversions per variation

- Absolute floor: 30–50 conversions per variation (only for directional insights)

Example 1: If your landing page converts at 2%, that means:

- 100 conversions require ~5,000 visitors per variation

- With 1,000 visitors/day total (500 per variation), you’ll need ~10 days

Example 2: If your page converts at 5%, then:

- 100 conversions require ~2,000 visitors per variation

- With 1,000 visitors/day total (500 per variation), you’ll need ~4 days

The lower your conversion rate, the longer your test must run.

This is why high-ticket or low-volume stores often need 2–3 weeks for reliable landing page testing for paid traffic. If you stop at 10 conversions per version, you’re not testing, you’re just guessing.
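The arithmetic in the two examples above can be wrapped in a small helper so you can plug in your own numbers. This is a rough planning sketch based on the 100-conversions rule of thumb, not a statistical power calculation; the function names are assumptions.

```python
import math


def visitors_needed(target_conversions: int, conversion_rate: float) -> int:
    """Visitors per variation needed to expect `target_conversions`."""
    if not 0 < conversion_rate <= 1:
        raise ValueError("conversion_rate must be in (0, 1]")
    # round() guards against floating-point noise before rounding up
    return math.ceil(round(target_conversions / conversion_rate, 9))


def days_needed(target_conversions: int, conversion_rate: float,
                daily_visitors_total: int, variations: int = 2) -> int:
    """Days required when daily traffic is split evenly across variations."""
    per_variation_per_day = daily_visitors_total / variations
    needed = visitors_needed(target_conversions, conversion_rate)
    return math.ceil(round(needed / per_variation_per_day, 9))


# Example 1 from the text: 2% conversion rate, 1,000 visitors/day in total
print(visitors_needed(100, 0.02), days_needed(100, 0.02, 1000))
# Example 2 from the text: 5% conversion rate, 1,000 visitors/day in total
print(visitors_needed(100, 0.05), days_needed(100, 0.05, 1000))
```

Treat the result as a lower bound on duration. The one-week minimum discussed in the next section still applies even when the conversion target is reached sooner.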

Why Stopping Tests Too Early Kills ROAS

Here’s a common mistake:

- Day 2: Version B is +25%

- Day 3: Version A catches up

- Day 4: Panic. Test paused.

Short-term swings are normal because Facebook traffic fluctuates daily. Weekday vs weekend behavior alone can shift conversion rate by 10–20%.

If you want stable results:

- Run tests for at least one full business cycle (7 days minimum)

- Ideally, 10–14 days for consistent paid traffic

Even if you hit statistical significance early, letting the test run through different traffic patterns improves reliability.

This is one of the most common A/B testing mistakes: stopping when the numbers look exciting instead of when they’re stable.
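One way to resist the urge to stop early is to write the stopping rule down before launch. The sketch below encodes the two thresholds from this article (at least 7 days and at least 100 conversions per variation) as a simple check. The function name and defaults are assumptions you can adjust.

```python
def ready_to_evaluate(days_running: int, conversions_a: int, conversions_b: int,
                      min_days: int = 7, min_conversions: int = 100) -> bool:
    """Return True only when both duration and volume thresholds are met."""
    ran_full_cycle = days_running >= min_days
    enough_conversions = min(conversions_a, conversions_b) >= min_conversions
    return ran_full_cycle and enough_conversions


# Day 2 with an exciting early lead: not ready yet.
print(ready_to_evaluate(days_running=2, conversions_a=30, conversions_b=38))
# Day 10 with enough conversions in both versions: ready to review.
print(ready_to_evaluate(days_running=10, conversions_a=104, conversions_b=121))
```

Passing this check means the test is ready for review. It does not say which version won.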

Statistical Significance vs Business Significance

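In practice, most teams treat a result as statistically significant once it reaches 95% confidence, meaning a difference that large would rarely show up by chance if the two versions truly performed the same.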

But here’s the nuance:

Suppose Version B improves the conversion rate from 2.00% to 2.08% and reaches 95% confidence. That’s statistically significant, but probably not business significant.

Let’s do the math:

- 10,000 visitors/month

- 2% CR → 200 orders

- 2.08% CR → 208 orders

That’s 8 extra orders. If your AOV is $40, that’s $320/month.

Is this worth implementing? Maybe. Is this worth restructuring your entire funnel? Probably not.

While statistical confidence tells you the result is likely real, business impact tells you whether it matters. When evaluating performance, always analyze your testing results using both confidence level and revenue impact.
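Both checks can be sketched in a few lines. The example below uses a pooled two-proportion z-test, which is one common way to estimate significance for conversion rates. It is an assumption for illustration, not necessarily the method your testing tool applies. The function names are hypothetical, and the revenue figures reuse the numbers from the example above.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rate."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("sample sizes must be positive")
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("cannot test when the pooled rate is 0 or 1")
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value


def monthly_revenue_impact(visitors: int, cr_control: float,
                           cr_variant: float, aov: float) -> float:
    """Extra monthly revenue implied by the conversion rate difference."""
    return visitors * (cr_variant - cr_control) * aov


z, p = two_proportion_z_test(conv_a=200, n_a=10_000, conv_b=208, n_b=10_000)
extra = monthly_revenue_impact(10_000, 0.02, 0.0208, 40.0)
print(f"z={z:.2f}, p={p:.3f}, significant at 95%: {p < 0.05}")
print(f"Extra revenue per month: ${extra:.0f}")
```

A lift as small as 2.00% to 2.08% generally needs a far larger sample than 10,000 visitors per variation to clear 95% confidence under this test. That is one more reason to weigh the revenue impact before acting on it.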

From Experiment to Scale: Ready to Turn Winners into Profitable Campaigns

Running a Facebook landing page test only creates value if you know what happens after a winner is declared.

Too many brands stop at “Version B won” and consider the job done. There’s no documentation of what changed, no structured rollout plan, and no clear hypothesis recorded for future reference. The winning variation gets pushed live, but the reasoning behind it disappears.

Then a month later, someone redesigns the page again with a new layout, new copy, and new assumptions. And the original learning? Gone.

That’s not experimentation. It’s random iteration disguised as optimization.

Real experimentation means building institutional memory, so each test compounds insight instead of resetting the baseline every time.

Apply the Winning Template Across Campaigns

When a variation clearly improves conversion rate or revenue per visitor, it shouldn’t stay isolated to one campaign. Winning messaging, layout structure, or offer framing should be rolled out across similar traffic segments.

If you’re running multiple ad sets, seasonal campaigns, or retargeting funnels, the validated version becomes your new control.

This is where structured Shopify split testing starts compounding results instead of creating one-off wins.

Scale Ad Spend Safely

A validated landing page reduces risk when increasing the budget. Instead of scaling ads blindly and hoping performance holds, you’re scaling into a proven conversion environment.

That’s a fundamental shift.

Instead of redesigning blindly every month, brands use GemX: CRO & A/B Testing to build a repeatable testing loop: launch, validate, apply the winner, and scale ads confidently.

Create a Continuous Testing Loop

The real growth lever isn’t a single experiment. It’s consistency.

Sustainable performance doesn’t come from one winning variation, it comes from a structured system:

- Document what you tested

- Record the measurable impact

- Clarify why the variation worked

Then prioritize the next hypothesis based on data, not intuition. Over time, this becomes an intentional testing loop instead of a series of disconnected changes.
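A lightweight way to keep that institutional memory is a structured record per experiment. The sketch below is one possible shape for it. The class name, field names, and example values are all hypothetical, and a shared spreadsheet with the same columns would work just as well.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperimentRecord:
    name: str
    hypothesis: str       # what you expected and why
    change_tested: str    # the single variable that differed from control
    primary_metric: str   # e.g. "conversion_rate" or "revenue_per_visitor"
    control_value: float
    variant_value: float
    why_it_worked: str    # interpretation, written after the test ends

    @property
    def relative_lift(self) -> float:
        """Relative change of the variant over the control."""
        if self.control_value == 0:
            raise ValueError("control_value must be non-zero")
        return (self.variant_value - self.control_value) / self.control_value


# Hypothetical entry, for illustration only.
record = ExperimentRecord(
    name="hero-headline-test",
    hypothesis="A headline that repeats the ad promise reduces bounce",
    change_tested="Hero headline copy",
    primary_metric="conversion_rate",
    control_value=0.020,
    variant_value=0.024,
    why_it_worked="Headline matched the pain point used in the ad",
)
print(f"{record.name}: {record.relative_lift:+.0%} on {record.primary_metric}")
```

Each field maps to one of the three items above: what was tested, the measurable impact, and why the variation worked.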

Build an experiment roadmap so experimentation becomes a process embedded in your growth strategy, not a reaction every time ROAS dips.

If you’re running Facebook ads on Shopify, your next growth lever isn’t another creative refresh. It’s controlled experimentation applied to the place where conversion actually happens.

Start with your landing page!