- What Is Email A/B Testing

- Why Email Marketing A/B Testing Matters

- What Elements You Should Test in Email A/B Testing

- Step-by-Step Guide to Run an Email A/B Test

- Key Metrics to Measure in Email A/B Testing

- 5 Email A/B Testing Examples to Inspire Your Next Campaign

- How Email A/B Testing Fits into Your Optimization Journey

- Final Thoughts

- FAQs about Email A/B Testing

Every email marketer has faced the same question before hitting Send: Will this version actually perform better? A different subject line, a stronger CTA, or a new layout might increase opens and clicks, but without testing, it’s all guesswork.

That’s where email A/B testing comes in. By comparing two variations of the same email campaign, you can identify what truly resonates with your audience and continuously improve performance.

In this guide, you’ll learn how email A/B testing works, what elements you should test, real examples, and practical best practices to turn every campaign into a data-driven growth opportunity.

What Is Email A/B Testing

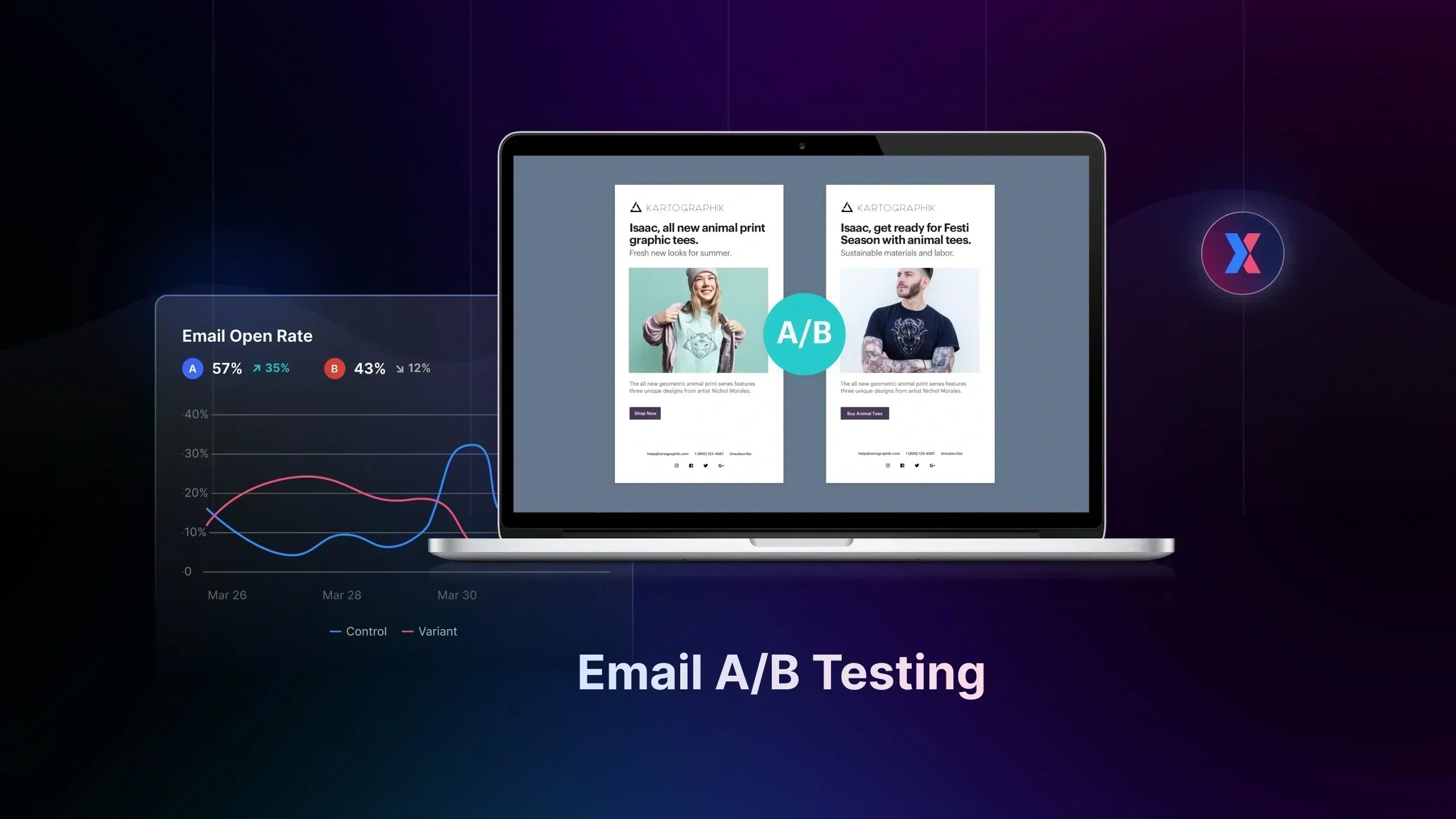

Email A/B testing (also called email marketing A/B testing or email split testing) is the process of sending two variations of the same email campaign to different segments of your audience to determine which version performs better. By comparing results such as open rates, click-through rates, or conversions, marketers can identify which email elements resonate most with subscribers.

In a typical email A/B test, one version of the email (Variant A) acts as the control, while the other version (Variant B) includes a single change, such as a different subject line, CTA, or email layout. Each version is sent to a randomly split portion of your email list, allowing you to measure which variation drives stronger engagement.

Source: Mida.so

For example, a marketer running email marketing A/B testing might test two subject lines for the same campaign:

- Variant A: “Your 20% discount ends tonight”

- Variant B: “Last chance to save 20% on your order”

Both emails are sent to separate groups of subscribers. After the campaign runs, the marketer analyzes performance metrics to see which subject line generated more opens or clicks.

This simple testing approach removes guesswork from email marketing. Instead of relying on assumptions, A/B testing email campaigns allows marketers to make data-driven decisions and continuously improve the effectiveness of their email strategy.

Why Email Marketing A/B Testing Matters

Even small changes can significantly impact how subscribers interact with your emails. Instead of relying on assumptions or generic best practices, email marketing A/B testing allows marketers to make decisions based on real user behavior and measurable results.

Improve Open Rates

Your subject line is the first thing subscribers see in their inbox. Through email A/B testing, marketers can experiment with different subject line formats, word choices, or personalization tactics to discover which version encourages more recipients to open the email.

Over time, these tests help identify patterns in subscriber behavior, allowing you to improve email open rates consistently.

Increase Click-Through Rates (CTR)

Getting subscribers to open an email is only the first step. The real goal of most campaigns is to drive clicks that lead to deeper engagement or conversions.

Email marketing A/B testing can help optimize the elements that influence click behavior, including:

- CTA button wording

- Button design or placement

- Email layout and visual hierarchy

- Content structure and messaging

By testing these elements systematically, marketers can identify which email design or messaging approach encourages subscribers to take action.

Drive More Conversions and Revenue

Ultimately, the success of A/B testing email marketing campaigns is measured by its impact on conversions. Whether your goal is generating sales, sign-ups, or product views, testing helps you refine the combination of elements that lead subscribers to complete the desired action.

For e-commerce brands, email A/B testing can reveal insights such as:

- Which promotional messaging drives more purchases

- Which product imagery attracts more clicks

- Which discount presentation increases conversion rates

Small optimization gains across multiple campaigns can compound into meaningful increases in revenue over time.

Understand Subscriber Behavior

Beyond performance improvements, email A/B testing also helps marketers better understand their audience. Each test reveals insights about how subscribers respond to different messaging, designs, and timing strategies.

Source: Nebo Agency

These insights allow marketing teams to refine their broader communication strategy and create email campaigns that align more closely with subscriber preferences.

Key takeaway: Even minor changes discovered through email marketing A/B testing can produce measurable improvements. In many campaigns, optimizing a single element can increase engagement by 10–30% or more.

What Elements You Should Test in Email A/B Testing

One of the biggest advantages of email A/B testing is flexibility. Marketers can test many different elements within an email campaign to identify what drives better engagement.

However, when running email marketing A/B testing, it’s important to change only one element at a time. This ensures you can clearly determine which change caused the difference in performance.

Below are some of the most common elements tested in A/B testing email campaigns.

Subject Lines

The subject line is often the first element marketers test in email A/B testing because it has a direct impact on open rates.

Even small changes in wording or structure can influence whether subscribers open your email.

Common subject line tests include:

- Question vs. Statement: “Ready for 20% off today?” vs. “Enjoy 20% off today”

- Emoji vs. No emoji: “🔥 Flash Sale Starts Now” vs. “Flash Sale Starts Now”

- Short vs. Longer subject lines

Because subject lines determine whether an email gets opened, optimizing them through email marketing A/B testing can quickly improve campaign performance.

Preview Text

Preview text (also called preheader text) appears next to the subject line in most inboxes. While many marketers overlook it, preview text plays a significant role in influencing open rates.

Source: FunnelKit

Through email A/B testing, you can experiment with different preview text strategies such as:

- Reinforcing the subject line message

- Adding urgency or exclusivity

- Highlighting the main benefit of the email

For example:

- Variant A: “Your 20% discount is waiting.”

- Variant B: “Only 12 hours left to claim your discount.”

Testing preview text alongside subject lines is a common approach in email marketing A/B testing to increase email opens.

Email Copy and Messaging

Another common focus of A/B testing email marketing campaigns is the body content of the email.

Different writing styles or messaging strategies can influence how subscribers engage with the content.

Examples of copy tests include:

- Short vs. Long email copy

- Different tone of voice (such as Informational vs. Conversational)

- Personalized vs. Generic messaging

- Benefit-focused vs. Feature-focused copy

Testing these variations through email A/B testing helps marketers understand what type of messaging resonates most with their audience.

Images and Visual Elements

Visual content plays a key role in how subscribers perceive an email. Through email marketing A/B testing, marketers can experiment with different visual approaches to see which version drives higher engagement.

Examples of visual tests include:

- Images vs. No images

- Product photos vs. Lifestyle images

- Static images vs. GIFs or animated visuals

For e-commerce brands in particular, testing visual elements with A/B testing email campaigns can reveal which imagery encourages more clicks or product interest.

Call-to-Action (CTA)

The call-to-action (CTA) is one of the most important components of any marketing email. It directs subscribers toward the next step, whether that’s visiting a product page or completing a purchase.

Because of its impact on clicks and conversions, the CTA is frequently tested in email A/B testing.

Common CTA tests include:

- Button vs. Text link

- Different CTA wording: “Shop Now” vs. “Get 20% Off Today”

- CTA placement within the email


Optimizing CTAs through email marketing A/B testing can significantly improve click-through rates.

Send Time and Day

Timing can also affect the performance of A/B testing email campaigns. Different audiences may respond better to emails sent at specific times or days.

Common timing tests include:

- Morning vs. Evening send times

- Weekday vs. Weekend campaigns

- Different days of the week

By experimenting with timing through email A/B testing, marketers can identify when their audience is most likely to open and engage with emails.

Step-by-Step Guide to Run an Email A/B Test

A well-planned email marketing A/B testing process ensures your experiments generate insights you can confidently apply to future campaigns.

Follow these steps to run effective A/B testing for email marketing.

Step 1: Define Your Goal

Start by identifying what you want to improve in your email campaign. Every email A/B test should have a clear objective tied to a measurable metric.

Common goals include:

- Increasing open rates

- Improving click-through rates (CTR)

- Driving more conversions or purchases

For example:

| Goal: Increase email open rates by optimizing the subject line. |

A clear objective ensures your email marketing A/B testing efforts stay focused and measurable.

Step 2: Create a Hypothesis

Before launching an A/B testing email campaign, form a hypothesis about what change might improve results.

A simple template can help you write a testable hypothesis:

We believe changing [X] will improve [Y] because of [Z].

Example:

We believe adding urgency to the subject line will increase open rates because subscribers respond strongly to limited-time offers.

Creating a hypothesis helps ensure your email A/B testing is driven by reasoning rather than random experimentation.

Step 3: Test One Variable

To keep results reliable, change only one element in your email variation.

For example:

- Subject line wording

- CTA button text

- Email layout

Testing multiple elements at once makes it difficult to determine what caused the change in performance. Keeping your email marketing A/B testing focused on a single variable produces clearer insights.
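If you keep your email variants as structured data, a quick check can confirm that only one element differs before you send. The sketch below is a minimal illustration: the field names (`subject`, `cta_text`, `layout`) and the helper `changed_fields` are hypothetical and not tied to any particular email platform.

```python
def changed_fields(control: dict, variant: dict) -> list[str]:
    """Return the names of fields whose values differ between two email definitions."""
    keys = sorted(set(control) | set(variant))
    return [key for key in keys if control.get(key) != variant.get(key)]


# Hypothetical email definitions; Variant B changes only the subject line.
variant_a = {
    "subject": "Your 20% discount ends tonight",
    "cta_text": "Shop Now",
    "layout": "single-column",
}
variant_b = {**variant_a, "subject": "Last chance to save 20% on your order"}

diff = changed_fields(variant_a, variant_b)
is_single_variable = len(diff) == 1

print("Changed fields:", diff)
print("Single-variable test:", is_single_variable)
```

If the list of changed fields contains more than one name, the test is no longer isolating a single variable, and it is worth splitting it into separate experiments.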

Step 4: Split Your Audience

Next, divide your email list into two comparable audience segments. Each group receives one version of the email.

For example:

- Group A → receives Variant A

- Group B → receives Variant B

A random split ensures your email A/B testing results reflect the email variation itself rather than differences in audience behavior.
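Most email platforms handle the split for you, but the idea is simple enough to sketch by hand. The example below assumes your list is available as a plain Python list of addresses; the function name `split_audience` and the fixed `seed` are illustrative choices, not part of any specific tool.

```python
import random


def split_audience(subscribers, seed=42):
    """Shuffle a copy of the list and cut it in half to form two random groups."""
    shuffled = list(subscribers)           # copy so the original order is untouched
    random.Random(seed).shuffle(shuffled)  # seeded so the split is reproducible
    midpoint = len(shuffled) // 2
    return shuffled[:midpoint], shuffled[midpoint:]


# Hypothetical subscriber list for illustration.
subscribers = [f"user{i}@example.com" for i in range(1000)]
group_a, group_b = split_audience(subscribers)

print(len(group_a), "subscribers receive Variant A")
print(len(group_b), "subscribers receive Variant B")
```

Shuffling before cutting matters: splitting an unshuffled list (for example, sorted by signup date) would put older subscribers in one group and newer ones in the other, which is exactly the kind of audience difference a random split is meant to avoid.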

Step 5: Run the Test and Collect Data

Once the email campaign is sent, allow the test to run long enough to gather meaningful engagement data.

During this phase of A/B testing email campaigns, monitor performance indicators such as:

- Open rate

- Click-through rate

- Conversions

Avoid ending the test too early, as incomplete data may lead to incorrect conclusions.

Learn more: How Long Should You Run a Test to Ensure A Reliable Results
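How long is "long enough" depends largely on how many recipients each variant needs. As a rough sketch, the standard sample-size approximation for comparing two proportions can give you a ballpark figure. The 5% significance level and 80% power used as defaults here are common conventions, assumed for illustration, and the function name is hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, expected_rate, alpha=0.05, power=0.8):
    """Approximate recipients needed per variant to detect the given lift
    with a two-sided test for two proportions."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    p_bar = (baseline_rate + expected_rate) / 2
    spread_null = math.sqrt(2 * p_bar * (1 - p_bar))
    spread_alt = math.sqrt(
        baseline_rate * (1 - baseline_rate) + expected_rate * (1 - expected_rate)
    )
    numerator = (z_alpha * spread_null + z_beta * spread_alt) ** 2
    return math.ceil(numerator / (expected_rate - baseline_rate) ** 2)


# Hypothetical scenario: a 20% open rate that you hope to lift to 23%.
needed = sample_size_per_variant(0.20, 0.23)
print(f"Recipients needed per variant: {needed}")
```

The smaller the lift you want to detect, the more recipients each variant needs, which is why tests on small lists often end without a clear winner.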

Step 6: Analyze the Results

After collecting enough data, compare the performance of both email variations and determine the winning version.

Ask questions such as:

- Which version generated more opens?

- Which version produced more clicks or conversions?

- Did the change align with the original hypothesis?

Once a clear winner emerges, apply the winning element to future campaigns and continue iterating with new email marketing A/B tests.

Consistently repeating this process allows marketers to gradually refine their strategy and improve the overall performance of their email marketing campaigns.
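Before declaring a winner, it helps to check whether the difference between the two variants is larger than random noise would explain. One common approach (an assumption here, since many email tools run their own significance checks) is a two-proportion z-test. The sketch below uses only the Python standard library, and the numbers are hypothetical.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(successes_a, total_a, successes_b, total_b):
    """Return the z statistic and two-sided p-value for the difference
    between two rates (for example, opens out of emails sent)."""
    rate_a = successes_a / total_a
    rate_b = successes_b / total_b
    pooled = (successes_a + successes_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical results: Variant A got 400 opens from 2,000 sends,
# Variant B got 460 opens from 2,000 sends.
z, p_value = two_proportion_z_test(400, 2000, 460, 2000)
print(f"z = {z:.2f}, p-value = {p_value:.4f}")
print("Significant at the 5% level:", p_value < 0.05)
```

A p-value below your chosen threshold (0.05 is a common convention) suggests the winning variant's advantage is unlikely to be a fluke. A higher p-value means the test is inconclusive, not that the variants are identical.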

Key Metrics to Measure in Email A/B Testing

Running email A/B testing is only useful if you measure the right outcomes. Tracking the correct metrics helps you determine which variation truly performs better and why.

Here are the key metrics marketers typically monitor when analyzing email marketing A/B testing results.

Open Rate

Open rate measures the percentage of recipients who open your email.

Source: Loopify

It is most relevant when testing elements that influence inbox engagement, such as subject lines, preview text, and sender name. If your email A/B test focuses on improving opens, this is usually the primary metric to evaluate.

Click-Through Rate (CTR)

Click-through rate (CTR) measures the percentage of recipients who click a link inside your email.

This metric is especially important when testing elements such as:

- CTA buttons

- Email layout

- Content structure

- Images or product placements

In many A/B testing email campaigns, CTR reveals how effectively your email content motivates readers to take action.

Click-to-Open Rate (CTOR)

Click-to-open rate (CTOR) measures the percentage of people who clicked a link out of those who opened the email.

| Formula: CTOR = Clicks ÷ Opens |

While open rate shows how attractive your subject line is, CTOR reveals how engaging the email content itself is. This metric is often used in email marketing A/B testing when evaluating copy, visuals, or CTAs.
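To see how open rate, CTR, and CTOR relate, here is a minimal sketch that computes all three from raw counts. It assumes open rate and CTR are both divided by the number of recipients, as defined above; some platforms use delivered emails instead, so check your tool's definitions. The function name and the numbers are hypothetical.

```python
def email_metrics(recipients, opens, clicks):
    """Compute open rate, CTR, and CTOR as fractions from raw counts."""
    return {
        "open_rate": opens / recipients,
        "ctr": clicks / recipients,
        # CTOR = Clicks ÷ Opens; guard against an email nobody opened.
        "ctor": clicks / opens if opens else 0.0,
    }


# Hypothetical campaign: 1,000 recipients, 250 opens, 50 clicks.
metrics = email_metrics(recipients=1000, opens=250, clicks=50)
for name, value in metrics.items():
    print(f"{name}: {value:.1%}")
```

In this example only 5% of recipients clicked, but 20% of the people who opened did. That gap is why CTOR is the better lens for judging the email body, while open rate reflects the subject line and preview text.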

Conversions

For many campaigns, the most important outcome of email A/B testing is conversions.

A conversion might include:

- Completing a purchase

- Signing up for a service

- Downloading a resource

Tracking conversions helps determine which email variation actually drives business results, not just engagement.

Revenue per Email

For e-commerce brands, revenue per email is one of the most valuable metrics in A/B testing email marketing campaigns.

Source: Bluecore

It measures how much revenue each email generates on average, helping marketers understand which variation produces the highest financial impact.

Important note: Sometimes an email with a slightly lower open rate can still win an email A/B test if it ultimately drives more purchases.
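A small worked example makes this concrete. The figures below are invented for illustration, and the sketch assumes revenue per email is calculated as total attributed revenue divided by emails sent.

```python
def revenue_per_email(total_revenue: float, emails_sent: int) -> float:
    """Average revenue generated by each email sent."""
    return total_revenue / emails_sent


# Hypothetical results: Variant A gets more opens, Variant B earns more revenue.
variants = {
    "A": {"emails_sent": 5000, "opens": 1250, "revenue": 1500.0},
    "B": {"emails_sent": 5000, "opens": 1100, "revenue": 1800.0},
}

summary = {
    name: {
        "open_rate": data["opens"] / data["emails_sent"],
        "revenue_per_email": revenue_per_email(data["revenue"], data["emails_sent"]),
    }
    for name, data in variants.items()
}

winner = max(summary, key=lambda name: summary[name]["revenue_per_email"])

for name, stats in summary.items():
    print(
        f"Variant {name}: open rate {stats['open_rate']:.1%}, "
        f"revenue per email ${stats['revenue_per_email']:.2f}"
    )
print(f"Winner by revenue per email: Variant {winner}")
```

Here Variant A wins on opens, but Variant B earns more per email sent, so B is the better choice if revenue is the goal of the test.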

5 Email A/B Testing Examples to Inspire Your Next Campaign

To understand the real impact of email A/B testing, it helps to look at practical examples. Many brands regularly run email marketing A/B testing experiments to improve open rates, click-through rates, and conversions.

#1: Subject Line Test

Subject lines are one of the most commonly tested elements in email A/B testing because they directly influence open rates.

For example, HubSpot once tested personalized subject lines against generic ones.

- Variant A: “Download Your Marketing Guide”

- Variant B: “[user-name], download your marketing guide”

In many cases, personalization increases open rates because the message feels more relevant to the subscriber.

Even small wording changes can produce noticeable differences in open rates.

#2: CTA Button Optimization

Calls-to-action (CTAs) are critical for driving clicks, which is why many marketers run A/B testing email marketing experiments focused on CTA design.

For instance, Campaign Monitor tested the impact of CTA button design in their emails.

- Variant A with Text link: “Read the guide”

- Variant B with CTA button: “Download the Guide”

The test revealed that the button-style CTA increased click-through rates by more than 25% compared to the text link.

Because the CTA directly affects user action, optimizing it through email marketing A/B testing can significantly increase engagement.

#3: Send Time Optimization

Another common email A/B testing example involves determining the best time to send emails.

Different audiences check their inboxes at different times, so testing send schedules can reveal valuable insights.

For example, Mailchimp has reported that many brands experiment with:

- Variant A: Email sent at 9:00 AM

- Variant B: Email sent at 7:00 PM

Some audiences engage more during working hours, while others are more responsive in the evening.

Through consistent email marketing A/B testing, marketers can identify patterns in subscriber behavior and schedule campaigns at the times when engagement is highest.

#4: Personalization in Email Content

Personalization is another popular experiment in A/B testing email campaigns.

For example, Amazon frequently personalizes product recommendations in their email campaigns.

A typical email A/B test might compare:

- Variant A: Generic product promotion email

- Variant B: Personalized recommendations based on browsing history

Personalized emails often perform better because they align with the subscriber’s interests.

Other personalization tests in email marketing A/B testing include:

- Using the subscriber’s first name in the subject line

- Showing location-based offers

- Highlighting previously viewed products

For e-commerce brands, personalization experiments can significantly improve both click-through rates and conversions.

#5: Images vs. Minimal Design

Visual design can also affect how readers engage with an email.

For example, SitePoint once tested including images in their newsletter.

- Variant A: Newsletter with multiple images

- Variant B: Text-focused newsletter with minimal visuals

Interestingly, their test showed that the image-heavy version slightly reduced conversions, likely because the visuals distracted readers from the main content.

This example shows why email A/B testing is so important: what works for one brand may not work for another.

How Email A/B Testing Fits into Your Optimization Journey

Running email A/B testing is a powerful way to improve how subscribers engage with your campaigns. By testing subject lines, messaging, CTAs, and send timing, marketers can steadily increase open rates and click-through rates.

But email experiments only optimize one stage of the customer journey.

Once a subscriber clicks your email, their experience continues on your website, where the real conversion decisions happen.

A typical e-commerce journey looks like this:

| (1) Email campaign ↓ (2) Landing page or product page ↓ (3) Browsing products ↓ (4) Checkout and purchase |

This means that even if your email marketing A/B testing successfully increases clicks, your results will still depend on what happens after users land on your store.

For example:

- A better subject line can increase open rates

- A stronger CTA can increase click-through rates

- But the website experience determines whether visitors actually convert

That’s why high-performing marketing teams treat email A/B testing as one tactic within a broader experimentation strategy. Email experiments help drive more qualified traffic to your store.

The next step is optimizing the pages that visitors land on. This is where GemX: CRO & A/B Testing becomes an important part of the workflow.

With GemX, Shopify merchants can run A/B tests directly on their storefront, experimenting with page layouts, messaging, product sections, and conversion elements without complex development work. By combining email marketing A/B testing with on-site experiments, you can optimize the entire journey from inbox → click → purchase.

Final Thoughts

Email A/B testing is one of the most effective ways to improve the performance of your email marketing campaigns. By testing elements like subject lines, CTAs, content, and send timing, marketers can continuously refine their messaging and better understand what resonates with their audience.

By combining email marketing A/B testing with on-site experiments, you can optimize the entire customer journey, from inbox engagement to final purchase, and turn more campaign traffic into measurable revenue.

FAQs about Email A/B Testing

- Subject lines

- Preview text

- Email copy and messaging

- Images and visual layout

- Call-to-action (CTA) buttons

- Send time or day

- If you are testing subject lines, focus on open rate.

- If you are testing email content or CTAs, analyze click-through rate (CTR).

- If your goal is revenue, track conversions or revenue per email.